Research

Research Interests

Physics Data for Understanding ML

Leveraging the unique properties of physics datasets (access to controllable data generation and known data symmetry and structure) to probe the internal mechanisms of deep neural networks.

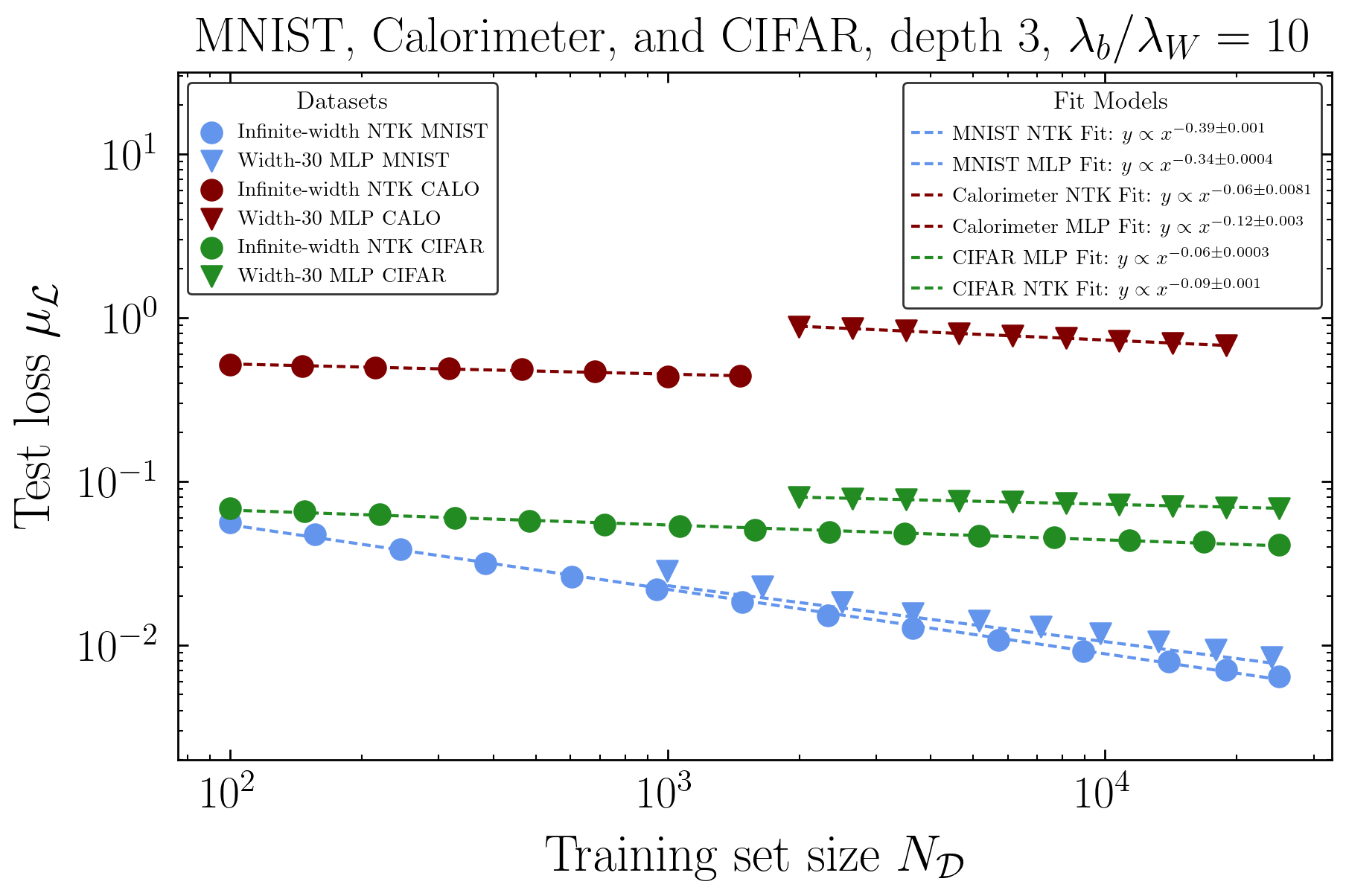

Physics-Inspired Scaling Laws

Using an effective field theory to predict how neural network ensembles behave, deriving scaling laws for uncertainty quantification without the need to train an ensemble.

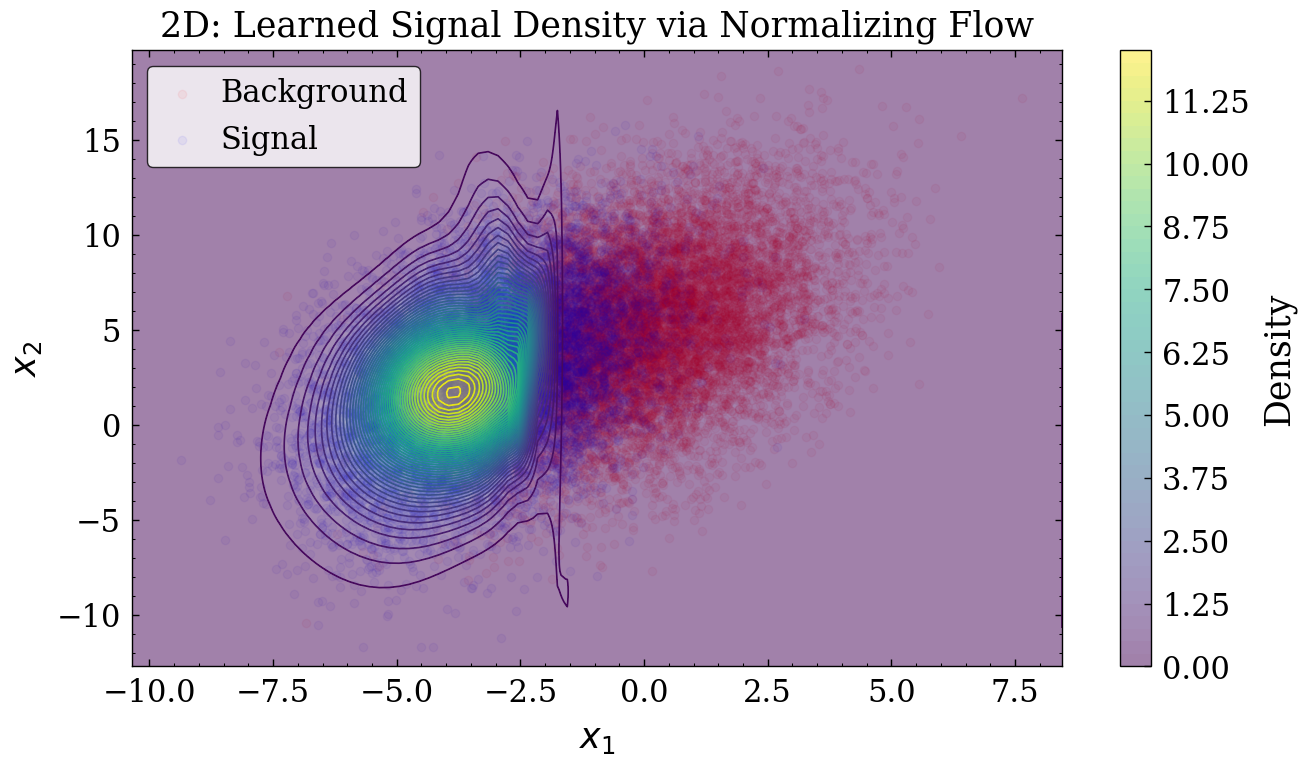

Automating Scientific Model Building

Developing ML methods aimed at “theory inversion”: parameter estimation with uncertainty quantification, simulation emulation, and automated theory writing.

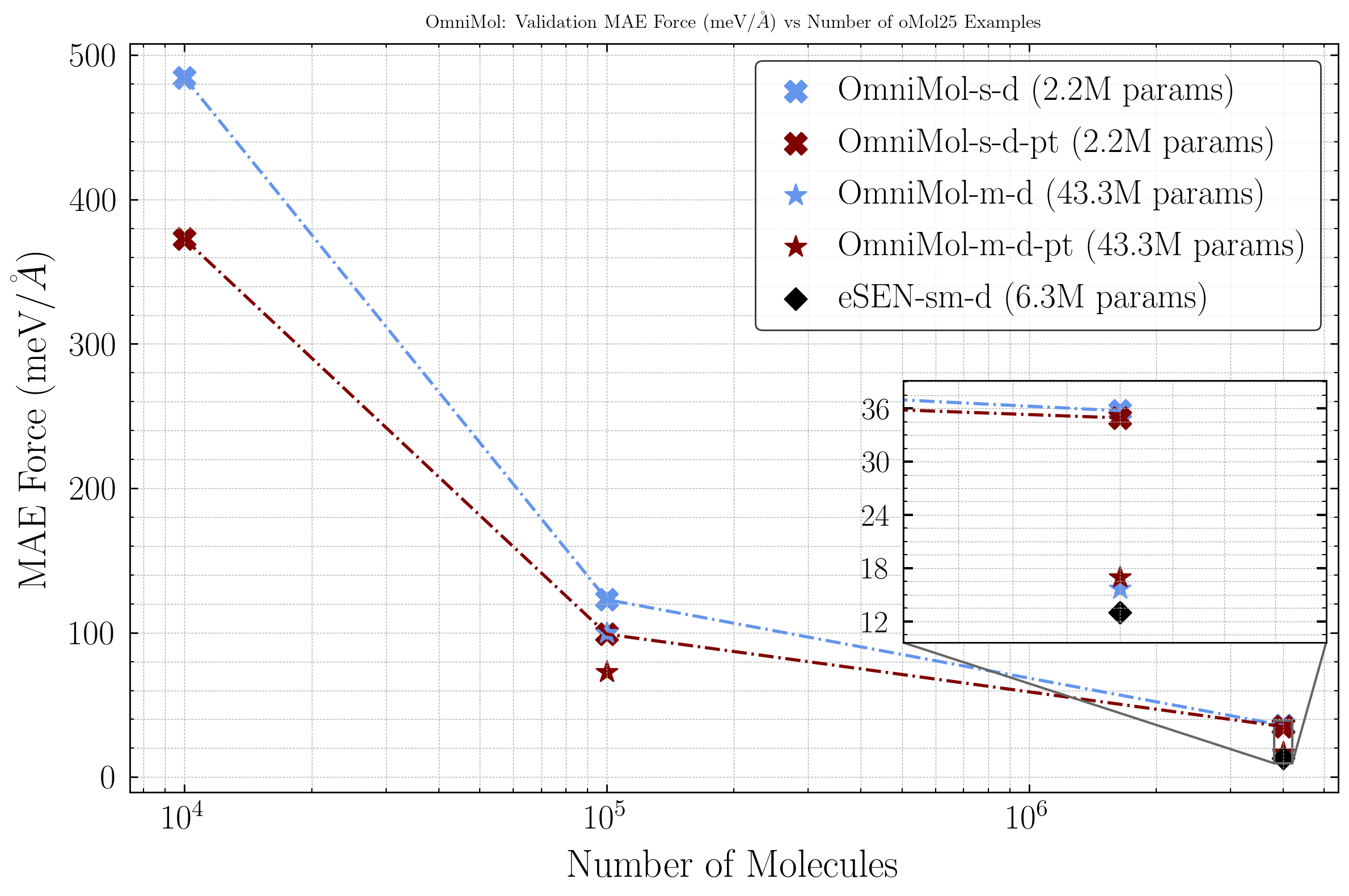

Cross-Domain Foundation Models

Building foundation models for scientific point clouds (irregular graphs!) that transfer knowledge across the domains of particle physics, cosmology, and molecular dynamics.

Select Papers

-

Machine Learning: Science and Technology, vol. 6, no. 3, 2025

-

arXiv preprint, 2025

-

arXiv preprint, 2026

-

arXiv preprint, 2026

-

NeurIPS 2025, Dataset and Competition Track

-

arXiv preprint, 2025

Select Talks & Posters

-

Invited Talk

1st Place Competition Milestone (Ensembles and Uncertainty Quantification)

The Challenge of Handling Uncertainties in Fundamental Science @ NeurIPS 2024

-

Invited Talk

Contrastive Normalizing Flows for Uncertainty-Aware Parameter Estimation

CERN 7th Inter-Experimental LHC Machine Learning Workshop, 2025

-

Poster

FAIR Universe HiggsML Uncertainty Dataset and Competition

NeurIPS 2025, Dataset and Competition Track

-

Poster

Uncertainty Quantification from Scaling Laws in Deep Neural Networks

Machine Learning and the Physical Sciences Workshop @ NeurIPS 2024

-

Poster

Pre-Training For Science: A study on Foundation Model Training Objectives

Stanford Center of Decoding the Universe Forum, 2025